In algorithmic information theory, the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963.
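
Kolmogorov complexity itself is not computable, but the output size of any lossless compressor gives a rough upper-bound proxy for it. The sketch below is an illustration only: it uses Python's standard `zlib` module, and the helper name `compressed_length` and the two sample inputs are assumptions made for this example.

```python
import os
import zlib


def compressed_length(data: bytes) -> int:
    """Length in bytes of the zlib-compressed form of `data`.

    This is only a crude stand-in for description length, not the true
    Kolmogorov complexity.
    """
    return len(zlib.compress(data, 9))


regular = b"ab" * 5000       # highly regular: a very short program could print it
noisy = os.urandom(10000)    # random bytes: no short description is expected

print(len(regular), compressed_length(regular))
print(len(noisy), compressed_length(noisy))
```

The regular string shrinks to a small fraction of its original size, while the random bytes barely compress at all.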

A communication channel refers either to a physical transmission medium such as a wire, or to a logical connection over a multiplexed medium such as a radio channel in telecommunications and computer networking. A channel is used to convey an information signal, for example a digital bit stream, from one or several senders to one or several receivers. A channel has a certain capacity for transmitting information, often measured by its bandwidth in Hz or its data rate in bits per second.

In telecommunication and information theory, the code rate of a forward error correction code is the proportion of the data-stream that is useful (non-redundant). That is, if the code rate is $k/n$, then for every $k$ bits of useful information the coder generates a total of $n$ bits of data, of which $n - k$ are redundant.
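
As a small worked example, the rate can be written as k/n, with k useful bits out of n transmitted bits. The function names below and the choice of a (7,4) code as the sample are assumptions made for illustration.

```python
from fractions import Fraction


def code_rate(k: int, n: int) -> Fraction:
    """Fraction of the transmitted bits that carry useful information."""
    if not 0 < k <= n:
        raise ValueError("expected 0 < k <= n")
    return Fraction(k, n)


def redundant_bits(k: int, n: int) -> int:
    """Number of redundant bits added per block of k useful bits."""
    if not 0 < k <= n:
        raise ValueError("expected 0 < k <= n")
    return n - k


# A block code that sends 7 bits for every 4 data bits.
print(code_rate(4, 7), redundant_bits(4, 7))
```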

In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable given that the value of another random variable is known. Here, information is measured in shannons, nats, or hartleys. The entropy of $Y$ conditioned on $X$ is written as $H(Y \mid X)$.
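
A minimal sketch of the computation, assuming the joint distribution is supplied as a dictionary from (x, y) pairs to probabilities and the result is wanted in shannons (bits). It uses the standard identity H(Y|X) = −Σ p(x, y) log₂(p(x, y) / p(x)); the function name and the two sample distributions are illustrative.

```python
from math import log2


def conditional_entropy(joint: dict) -> float:
    """H(Y|X) in bits for a joint distribution {(x, y): probability}."""
    # Marginal distribution of X, obtained by summing out Y.
    p_x = {}
    for (x, _), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
    return -sum(p * log2(p / p_x[x]) for (x, _), p in joint.items() if p > 0)


copy = {(0, 0): 0.5, (1, 1): 0.5}                         # Y always equals X
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}  # two fair bits

print(conditional_entropy(copy))         # knowing X leaves nothing to learn
print(conditional_entropy(independent))  # knowing X does not help at all
```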

In probability theory, particularly information theory, the conditional mutual information is, in its most basic form, the expected value of the mutual information of two random variables given the value of a third.

Cycles of Time: An Extraordinary New View of the Universe is a science book by mathematical physicist Roger Penrose published by The Bodley Head in 2010. The book outlines Penrose's Conformal Cyclic Cosmology (CCC) model, which extends general relativity but is opposed to the widely supported multidimensional string theories and cosmological inflation following the Big Bang.

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent in the variable's possible outcomes. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is sometimes called Shannon entropy in his honour. As an example, consider a biased coin with probability p of landing on heads and probability 1 − p of landing on tails. The maximum surprise is for p = 1/2, when there is no reason to expect one outcome over another, and in this case a coin flip has an entropy of one bit. The minimum surprise is when p = 0 or p = 1, when the event is known and the entropy is zero bits. Other values of p give different entropies between zero and one bits.
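
The coin example can be checked numerically. The sketch below evaluates the binary entropy −p log₂ p − (1 − p) log₂(1 − p); the function name `binary_entropy` is an assumption made for this illustration.

```python
from math import log2


def binary_entropy(p: float) -> float:
    """Entropy in bits of a coin that lands heads with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if p in (0.0, 1.0):
        return 0.0  # the outcome is certain, so there is no surprise
    return -p * log2(p) - (1 - p) * log2(1 - p)


for p in (0.0, 0.1, 0.5, 0.9, 1.0):
    print(p, binary_entropy(p))
```

The value peaks at one bit for p = 1/2 and falls to zero at p = 0 and p = 1.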

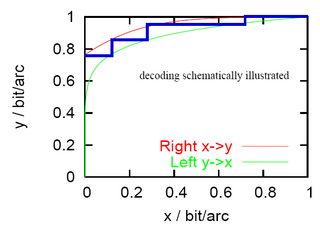

An extrinsic information transfer chart, commonly called an EXIT chart, is a technique to aid the construction of good iteratively-decoded error-correcting codes.

The generalized entropy index has been proposed as a measure of income inequality in a population. It is derived from information theory as a measure of redundancy in data. In information theory a measure of redundancy can be interpreted as non-randomness or data compression; thus this interpretation also applies to this index. An additional interpretation of the index is as a measure of biodiversity, since entropy has also been proposed as a measure of diversity.

In information theory, Gibbs' inequality is a statement about the information entropy of a discrete probability distribution. Several other bounds on the entropy of probability distributions are derived from Gibbs' inequality, including Fano's inequality. Gibbs' inequality was first presented by J. Willard Gibbs in the 19th century.
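
In its usual form, the inequality says that for two discrete distributions p and q over the same outcomes, −Σ pᵢ log pᵢ ≤ −Σ pᵢ log qᵢ, with equality when p and q coincide. The following sketch checks this numerically on one pair of distributions; the function names and sample values are assumptions chosen for illustration, and q is assumed to be positive wherever p is.

```python
from math import log2


def entropy(p):
    """Shannon entropy in bits of a discrete distribution given as a list."""
    return -sum(pi * log2(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """-sum p_i log2 q_i, assuming q_i > 0 wherever p_i > 0."""
    return -sum(pi * log2(qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.5, 0.25, 0.25]
q = [1 / 3, 1 / 3, 1 / 3]

print(entropy(p), cross_entropy(p, q))  # the left value does not exceed the right
print(cross_entropy(p, p))              # equality case: matches entropy(p)
```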

Grammatical Man: Information, Entropy, Language, and Life is a 1982 book written by the Evening Standard's Washington correspondent, Jeremy Campbell. The book touches on topics of probability, information theory, cybernetics, genetics and linguistics. The book frames and examines existence, from the Big Bang to DNA to human communication to artificial intelligence, in terms of information processes. The text consists of a foreword, twenty-one chapters, and an afterword. It is divided into four parts: Establishing the Theory of Information; Nature as an Information Process; Coding Language, Coding Life; How the Brain Puts It All Together.

Entropic gravity, also known as emergent gravity, is a theory in modern physics that describes gravity as an entropic force (a force with macro-scale homogeneity but which is subject to quantum-level disorder) rather than a fundamental interaction. The theory, based on string theory, black hole physics, and quantum information theory, describes gravity as an emergent phenomenon that springs from the quantum entanglement of small bits of spacetime information. As such, entropic gravity is said to abide by the second law of thermodynamics, under which the entropy of a physical system tends to increase over time.

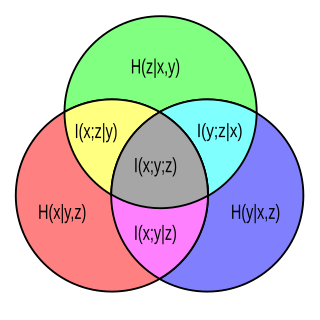

An information diagram is a type of Venn diagram used in information theory to illustrate relationships among Shannon's basic measures of information: entropy, joint entropy, conditional entropy and mutual information. Information diagrams are a useful pedagogical tool for teaching and learning about these basic measures of information, but using such diagrams carries some non-trivial implications. For example, Shannon's entropy in the context of an information diagram must be taken as a signed measure. Information diagrams have also been applied to specific problems, such as displaying the information-theoretic similarity between sets of ontological terms.

Integrated information theory (IIT) attempts to explain what consciousness is and why it might be associated with certain physical systems. Given any such system, the theory predicts whether that system is conscious, to what degree it is conscious, and what particular experience it is having. According to IIT, a system's consciousness is determined by its causal properties and is therefore an intrinsic, fundamental property of any physical system.

Roman Jakobson defined six functions of language, according to which an effective act of verbal communication can be described. Each of the functions has an associated factor. For this work, Jakobson was influenced by Karl Bühler's organon model, to which he added the poetic, phatic and metalingual functions.

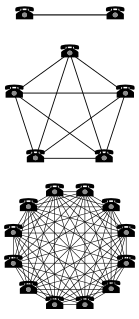

Metcalfe's law states that the value of a telecommunications network is proportional to the square of the number of connected users of the system ($n^2$). First formulated in this form by George Gilder in 1993, and attributed to Robert Metcalfe in regard to Ethernet, Metcalfe's law was originally presented, c. 1980, not in terms of users, but rather of "compatible communicating devices". Only later, with the globalization of the Internet, did this law carry over to users and networks, as its original intent was to describe Ethernet connections.
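
One common way to motivate the quadratic scaling is to count the distinct pairwise connections among n users, n(n − 1)/2, which grows in proportion to n² for large n. The sketch below tabulates that count; the function name `pairwise_links` is an assumption made for this illustration, and equating "value" with the number of links is a simplification.

```python
def pairwise_links(n: int) -> int:
    """Number of distinct pairs that can be formed among n connected users."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


for n in (10, 100, 1000):
    print(n, pairwise_links(n))

# Doubling the user count roughly quadruples the number of possible links.
print(pairwise_links(2000) / pairwise_links(1000))
```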

In radio, multiple-input and multiple-output, or MIMO, is a method for multiplying the capacity of a radio link using multiple transmission and receiving antennas to exploit multipath propagation. MIMO has become an essential element of wireless communication standards including IEEE 802.11n (Wi-Fi), IEEE 802.11ac (Wi-Fi), HSPA+ (3G), WiMAX, and Long Term Evolution (LTE). More recently, MIMO has been applied to power-line communication for three-wire installations as part of the ITU G.hn standard and of the HomePlug AV2 specification.

In probability theory and information theory, the mutual information (MI) of two random variables is a measure of the mutual dependence between the two variables. More specifically, it quantifies the "amount of information" obtained about one random variable through observing the other random variable. The concept of mutual information is intimately linked to that of entropy of a random variable, a fundamental notion in information theory that quantifies the expected "amount of information" held in a random variable.
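
For discrete variables, the quantity can be computed directly from a joint distribution via I(X; Y) = Σ p(x, y) log₂(p(x, y) / (p(x) p(y))). The sketch below assumes the joint distribution is given as a dictionary from (x, y) pairs to probabilities; the function name and sample distributions are illustrative.

```python
from math import log2


def mutual_information(joint: dict) -> float:
    """I(X; Y) in bits for a joint distribution {(x, y): probability}."""
    # Build both marginals by summing the joint over the other variable.
    p_x, p_y = {}, {}
    for (x, y), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
        p_y[y] = p_y.get(y, 0.0) + p
    return sum(
        p * log2(p / (p_x[x] * p_y[y]))
        for (x, y), p in joint.items()
        if p > 0
    )


copy = {(0, 0): 0.5, (1, 1): 0.5}                         # Y always equals X
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}  # two fair bits

print(mutual_information(copy))         # observing Y reveals X completely
print(mutual_information(independent))  # observing Y reveals nothing about X
```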

The Nyquist–Shannon sampling theorem is a theorem in the field of signal processing which serves as a fundamental bridge between continuous-time signals and discrete-time signals. It establishes a sufficient condition for a sample rate that permits a discrete sequence of samples to capture all the information from a continuous-time signal of finite bandwidth.
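
The sufficient condition is a sample rate greater than twice the signal's bandwidth. The sketch below illustrates what goes wrong below that rate: sampled at 4 Hz, a 3 Hz cosine produces exactly the same samples as a 1 Hz cosine, so the two cannot be told apart. The function names and the chosen frequencies are assumptions made for this example.

```python
from math import cos, isclose, pi


def sample_cosine(freq_hz: float, sample_rate_hz: float, count: int):
    """Return `count` samples of cos(2*pi*f*t) taken at the given sample rate."""
    return [cos(2 * pi * freq_hz * n / sample_rate_hz) for n in range(count)]


def same_samples(a, b, tol: float = 1e-9) -> bool:
    """True when two equally long sample sequences agree within `tol`."""
    return all(isclose(x, y, abs_tol=tol) for x, y in zip(a, b))


# Undersampled: 4 Hz is below 2 * 3 Hz, so the 3 Hz tone aliases onto 1 Hz.
print(same_samples(sample_cosine(3, 4, 16), sample_cosine(1, 4, 16)))

# Adequately sampled: 8 Hz exceeds 2 * 3 Hz, so the two tones stay distinct.
print(same_samples(sample_cosine(3, 8, 16), sample_cosine(1, 8, 16)))
```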

Harry Nyquist was a Swedish-American electronic engineer who made important contributions to communication theory.

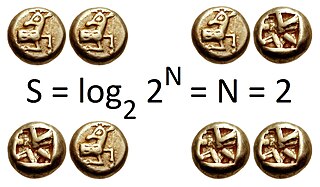

The mathematical theory of information is based on probability theory and statistics, and measures information with several quantities of information. The choice of logarithmic base in the following formulae determines the unit of information entropy that is used. The most common unit of information is the bit, based on the binary logarithm. Other units include the nat, based on the natural logarithm, and the hartley, based on the base-10 (common) logarithm.
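
To make the role of the logarithm base concrete, the sketch below computes the entropy of a fair coin in each of the three units. The function name `entropy` and its `base` parameter are assumptions made for this illustration.

```python
from math import e, log


def entropy(probs, base: float = 2.0) -> float:
    """Entropy of a discrete distribution, in the unit set by `base`."""
    return -sum(p * log(p, base) for p in probs if p > 0)


fair_coin = [0.5, 0.5]

bits = entropy(fair_coin, 2)       # binary logarithm: bits
nats = entropy(fair_coin, e)       # natural logarithm: nats
hartleys = entropy(fair_coin, 10)  # common logarithm: hartleys

print(bits, nats, hartleys)
```

The three results describe the same amount of information; they differ only by the constant factors ln 2 and log₁₀ 2.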

Random number generation is a process which, often by means of a random number generator (RNG), generates a sequence of numbers or symbols that cannot be reasonably predicted better than by random chance. Random number generators can be truly random hardware random-number generators (HRNGs), which generate random numbers as a function of the current value of some physical environment attribute that is constantly changing in a manner that is practically impossible to model, or pseudorandom number generators (PRNGs), which generate numbers that look random but are actually deterministic and can be reproduced if the state of the PRNG is known.
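
The reproducibility of a PRNG can be seen with Python's standard `random` module: two generators started from the same seed yield the same sequence. The helper name `draw` and the seed value are assumptions made for this illustration.

```python
import random


def draw(seed: int, count: int):
    """Return `count` pseudorandom integers in [0, 99] from a seeded generator."""
    rng = random.Random(seed)  # independent generator with its own state
    return [rng.randint(0, 99) for _ in range(count)]


first = draw(1234, 10)
second = draw(1234, 10)

# Same seed, same internal state, therefore the same "random" numbers.
print(first == second)
```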

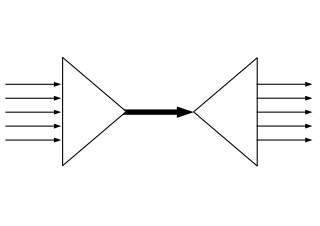

The Shannon–Weaver model of communication has been called the "mother of all models." Social scientists use the term to refer to an integrated model of the concepts of information source, message, transmitter, signal, channel, noise, receiver, information destination, probability of error, encoding, decoding, information rate, and channel capacity. However, some consider the name to be misleading, asserting that the most significant ideas were developed by Shannon alone.

Silq is a new high-level programming language for quantum computing with a strong static type system and support for safe uncomputation, developed at ETH Zürich.

Spatial multiplexing or space-division multiplexing is a multiplexing technique in MIMO wireless communication, fibre-optic communication and other communications technologies used to transmit independent channels separated in space. Other multiplexing techniques include frequency-division multiplexing (FDM), time-division multiplexing (TDM) and polarization-division multiplexing (PDM).

Spatiotemporal patterns are patterns that occur in a wide range of natural phenomena and are characterized by a spatial and a temporal patterning. The general rules of pattern formation hold. In contrast to "static", pure spatial patterns, the full complexity of spatiotemporal patterns can only be recognized over time. Any kind of traveling wave is a good example of a spatiotemporal pattern. Besides the shape and amplitude of the wave, its time-varying position in space is an essential part of the entire pattern.

Szemerédi's regularity lemma is one of the most powerful tools in extremal graph theory, particularly in the study of large dense graphs. It states that the vertices of every large enough graph can be partitioned into a bounded number of parts so that the edges between different parts behave almost randomly.

In coding theory and information theory, a Z-channel is a communications channel used to model the behaviour of some data storage systems.
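
Under the usual convention, a Z-channel is a binary channel in which a 0 is always received correctly, while a 1 is received as 0 with some probability p. The sketch below simulates that behaviour; the function name `z_channel`, its parameters, and the sample message are assumptions made for this illustration.

```python
import random


def z_channel(bits, p: float, rng=None):
    """Pass a list of 0/1 bits through a Z-channel with error probability p.

    Zeros always arrive intact; each one is flipped to zero with probability p.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if rng is None:
        rng = random.Random()
    return [0 if (b == 1 and rng.random() < p) else b for b in bits]


message = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
received = z_channel(message, 0.3, random.Random(0))

# Errors are one-directional: a received 1 was always sent as a 1.
print(all(r <= s for r, s in zip(received, message)))
```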

Zero suppression is the removal of redundant zeroes from a number. This can be done for storage, page or display space constraints or formatting reasons, such as making a letter more legible.
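
A minimal sketch of one simple case, removing leading zeroes from a string of decimal digits while keeping a single zero when the value itself is zero. The function name `suppress_leading_zeros` is an assumption, and other forms of zero suppression (for example trailing zeroes after a decimal point) are not handled here.

```python
def suppress_leading_zeros(digits: str) -> str:
    """Strip leading zeroes from a digit string, keeping at least one digit."""
    if not digits.isdigit():
        raise ValueError("expected a non-empty string of digits")
    # lstrip removes every leading "0"; fall back to "0" if nothing is left.
    return digits.lstrip("0") or "0"


for raw in ("000123", "0000", "1050"):
    print(raw, "->", suppress_leading_zeros(raw))
```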